Every week, a mid-size company somewhere signs a contract for an AI platform before anyone has agreed on what problem it’s supposed to solve. This article is the antidote to that.

The meeting that starts every failed AI project

You know the meeting. A board member returns from a conference, or reads a competitor’s press release, and the question lands in your lap: “What’s our AI strategy?” Within two weeks there’s a budget line, a vendor shortlist, and a project team assembled from people who have other jobs to do. Everyone is enthusiastic. Nobody has written down what success looks like. And six months later, when the pilot has quietly stalled and the vendor relationship has cooled, the consensus is that AI “wasn’t the right fit” — when the truth is that the company started in the wrong place.

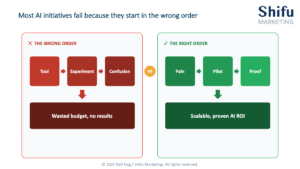

This is not a technology failure. It’s a sequencing failure. And it happens constantly, across industries and company sizes, because the pressure to act on AI has outpaced the frameworks for acting on it well.

The good news: starting well is simpler than the hype suggests. But only if you respect one rule about the order of operations.

The 3P Model: the only framework you need

Across AI adoption work with companies in Europe and the US, one pattern has emerged so consistently that it has become a working framework: every successful AI initiative moves through three stages in exactly this order.

Pain → Pilot → Proof

That’s it. Three words. But the sequence matters more than any tool, any platform, or any vendor promise — because companies that skip directly to Pilot without a clearly defined Pain almost always fail to reach Proof. The framework sounds obvious. In practice, it’s violated in roughly eight out of ten AI projects we encounter.

Stage 1 — Pain: start with the problem, not the technology

The most common entry point for AI adoption is the wrong one: a tool that looked impressive in a demo. The right entry point is a problem that costs your business real money, time, or accuracy every week — and that happens to be solvable with AI.

The diagnostic question is simple: does this pain point involve repetitive, data-driven work where the pattern is consistent and the stakes of a single error are manageable? If yes, you have an AI candidate. If the problem requires nuanced human judgment, complex relationship dynamics, or contextual creativity that changes with every instance — it’s not where you start.
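The diagnostic question can be written down as a short screening check. The sketch below is illustrative only: the `PainPoint` fields and the `is_ai_candidate` function are hypothetical names, the yes/no answers are assumed to come from the people who own the process, and the "key account negotiation" entry is an invented counter-example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PainPoint:
    """One candidate problem, scored against the diagnostic question."""
    name: str
    repetitive: bool               # the same task recurs week after week
    data_driven: bool              # the work runs on existing data
    consistent_pattern: bool       # the pattern does not change per instance
    single_error_manageable: bool  # one mistake is cheap to catch and fix


def is_ai_candidate(pain: PainPoint) -> bool:
    """Return True only if every part of the diagnostic question is a yes."""
    return all((
        pain.repetitive,
        pain.data_driven,
        pain.consistent_pattern,
        pain.single_error_manageable,
    ))


# Hypothetical answers for two example processes.
invoice_routing = PainPoint("invoice classification and routing", True, True, True, True)
key_account_negotiation = PainPoint("key account negotiation", False, False, False, False)

print(is_ai_candidate(invoice_routing))          # True
print(is_ai_candidate(key_account_negotiation))  # False
```

A single "no" is enough to rule a process out as a starting point, which mirrors the all-or-nothing wording of the question.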

Strong first-use cases for mid-size B2B companies tend to cluster in the same areas: invoice classification and routing, customer support ticket triage, meeting summary and action-item extraction, sales proposal first drafts, internal knowledge search. These are not glamorous. They are also where companies consistently see a 40–70% reduction in manual processing time within the first 90 days — because the problem is well-defined, the data exists, and the quality bar is clear.

The leadership team at a mid-size logistics company we worked with spent three months debating an AI strategy before we asked them one question: what is the single most time-consuming manual task in your operations? The answer was journey time reporting — a process that took two analysts four days per month to compile from disparate systems. That became the first pilot. Six weeks to build, four weeks running in parallel with the manual process, and the same output delivered in under two hours. That result — specific, measurable, operationally real — became the proof point that unlocked budget and buy-in for everything that followed.

Stage 2 — Pilot: one problem, one team, thirty days

The word “pilot” gets stretched into multi-month, multi-team explorations that lose their shape and their mandate. A proper pilot is smaller than most people think it should be, and more tightly defined: one use case, one team of willing participants, one tool, a 30-day window, and one success metric written down before day one.

That metric should be specific enough to be unambiguous. Not “improve efficiency” — but “reduce the time to produce the weekly pipeline report from four hours to under one hour.” Not “support customers better” — but “resolve at least 40% of tier-one support tickets without human escalation.” The specificity is not bureaucratic; it’s what allows you to make an actual decision at the end of the pilot rather than drifting into perpetual evaluation mode.
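One way to force that specificity is to record the metric as structured data before day one. The following is a minimal sketch, not a prescribed template: `SuccessMetric` and its fields are hypothetical, and the two instances simply restate the examples above. The ticket example has no baseline in the text, so none is filled in.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SuccessMetric:
    """A pilot metric written down before the pilot starts."""
    description: str
    unit: str
    target: float
    lower_is_better: bool
    baseline: Optional[float] = None  # left empty when no baseline is known

    def is_met(self, measured: float) -> bool:
        """Compare a measured value with the target.

        Lower-is-better metrics must come in strictly under the target
        ("under one hour"); higher-is-better metrics must reach it
        ("at least 40%").
        """
        if self.lower_is_better:
            return measured < self.target
        return measured >= self.target


pipeline_report = SuccessMetric(
    description="time to produce the weekly pipeline report",
    unit="hours",
    target=1.0,
    lower_is_better=True,
    baseline=4.0,
)

tier_one_tickets = SuccessMetric(
    description="tier-one support tickets resolved without human escalation",
    unit="percent",
    target=40.0,
    lower_is_better=False,
)

print(pipeline_report.is_met(0.75))   # True
print(tier_one_tickets.is_met(35.0))  # False
```

A goal like "improve efficiency" cannot be expressed in this form at all, because it has no unit and no target. That is the point of writing the metric down this way.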

In most cases, the pilot sits inside a tool your team already uses — integrated into your CRM, your helpdesk platform, or a workflow tool like Teams or Slack — rather than requiring a standalone new system. The less your team has to change their environment, the higher the adoption rate during the test window. Build vs. buy decisions come later; at the pilot stage, the question is simply: does this work well enough to be worth scaling?

Three prerequisites must be in place before the pilot starts. First, an executive sponsor who will clear obstacles and visibly signal that this matters: active ownership, not passive support. Second, basic data hygiene in the specific domain you’re working in, meaning the data feeding your use case is accurate, accessible, and consistent enough to be useful. Third, a change management plan, which at the pilot stage is modest: brief the team honestly, explain what will and won’t change, and make it easy to flag problems early.

Stage 3 — Proof: make a decision, not a hypothesis

The purpose of a pilot is a decision. Not a report, not a recommendation to explore further — a binary outcome: this worked and we are scaling it, or this didn’t work and here is exactly what we learned.

This is where most companies stall. The pilot produces ambiguous results, nobody wants to declare failure, and the initiative drifts into a second evaluation phase that gradually loses energy and budget. The antidote is to define “done” before you start: what result, measured how, over what time window, constitutes proof that this is worth scaling?
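The "define done before you start" rule can be sketched as a small decision function with only two possible outcomes. Everything here is an illustrative assumption: the `DefinitionOfDone` fields, the `decide` function, the start date, and the measured values are hypothetical, and the target reuses the pipeline-report example from Stage 2.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    STOP = "stop"


@dataclass(frozen=True)
class DefinitionOfDone:
    """What result, measured how, over what time window."""
    result: str
    measured_how: str
    start: date
    window_days: int
    target: float
    lower_is_better: bool

    @property
    def review_date(self) -> date:
        """First day on which the decision may be taken."""
        return self.start + timedelta(days=self.window_days)


def decide(done: DefinitionOfDone, measured: float, today: date) -> Decision:
    """Return SCALE or STOP; there is deliberately no third outcome."""
    if today < done.review_date:
        raise ValueError("pilot window is still open; decide on the review date")
    if done.lower_is_better:
        met = measured < done.target
    else:
        met = measured >= done.target
    return Decision.SCALE if met else Decision.STOP


done = DefinitionOfDone(
    result="weekly pipeline report produced in under one hour",
    measured_how="average hours per report during the pilot window",
    start=date(2025, 1, 6),  # hypothetical start date
    window_days=30,
    target=1.0,
    lower_is_better=True,
)

print(done.review_date)                          # 2025-02-05
print(decide(done, 0.75, date(2025, 2, 5)))      # Decision.SCALE
print(decide(done, 1.5, date(2025, 2, 5)))       # Decision.STOP
```

Leaving out an "extend the evaluation" outcome is a design choice: it makes drifting into a second evaluation phase impossible to express, so an ambiguous result has to be read as a stop with lessons learned.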

When the proof is clear — and it usually is, if Pain and Pilot were done correctly — the conversation changes entirely. You are no longer selling AI to the organisation on the basis of potential. You are presenting evidence. That shift, from hypothesis to proof, is what unlocks the organisational confidence to move from one use case to a second, a third, and eventually a coherent AI programme built on a foundation of things that have actually worked.

The three conditions that separate success from stall

Frameworks are only as useful as the conditions that support them. Three factors consistently determine whether an AI initiative reaches Proof or stalls in Pilot.

Executive sponsorship with teeth. Not a supportive email — a named leader at C-suite level who attends the pilot review, removes blockers when they appear, and connects the initiative to a business outcome they personally own. AI projects without a real sponsor die quietly and are written off as technology failures.

Data that is good enough, not perfect. One of the most persistent myths in AI adoption is that you need clean, well-governed, centrally structured data before you can start. What you need is data that is good enough for the specific use case you have chosen. The companies that wait for perfect data conditions are still waiting.

A definition of success written before day one. This sounds procedural. It is actually cultural. Writing down what success looks like, sharing it with the team, and holding to it at the pilot review is how you distinguish genuine learning from motivated reasoning — and how you protect the team that ran the pilot when results are mixed.

When to bring in external expertise

You do not need a consultant to start with AI. But external expertise accelerates the process significantly in specific situations: when you lack internal AI experience and the consequences of a poorly designed first pilot are high; when internal capacity constraints mean the initiative will sit deprioritised for months without external momentum; when you need an objective view of which use cases are genuinely high-value versus which are being proposed because someone has a vendor relationship; or when a previous pilot has failed and the internal diagnosis of why is unclear.

The right external partner does not create dependency. They are there to transfer a working method and hand over the process to internal owners. If an external partner is still indispensable to your AI programme after 12 months, something has gone wrong.

The starting point is closer than you think

AI adoption rarely fails because the technology is too difficult. It fails because organisations start in the wrong place — tool before problem, ambition before metric, company-wide rollout before a single proven result.

The companies that move fastest are almost never the ones with the most sophisticated infrastructure or the largest budgets. They are the ones that respected the sequence. And the use case that becomes their first proof point is almost always sitting inside a process someone in their business complained about in the last month.

The hardest part of starting with AI is identifying the right first use case. That’s exactly what an AI Readiness Assessment is designed to do — a structured 30-minute conversation to identify your highest-value starting point.

AI Implementation FAQ

1. Where should a company start with AI?

Companies should start with a clearly defined business problem, not a technology. The most effective framework is Pain → Pilot → Proof: identify the most expensive manual process, run a 30-day pilot with one tool and one metric, then make a binary scale-or-stop decision. Companies that skip the Pain stage rarely reach a measurable result.

2. How long does it take to implement AI in a business?

A first AI pilot typically takes 30 days to run, within a 90-day starting framework. The first month covers problem identification and data assessment. Month two runs the pilot. Month three evaluates results and makes a scaling decision. Meaningful ROI from a first use case is typically measurable within 60–90 days.

3. What are the most common reasons AI projects fail?

AI projects most commonly fail because companies choose a tool before defining a problem, a sequencing error we see in roughly eight out of ten AI projects we encounter. Secondary causes include no executive sponsor, poorly defined success metrics, and attempting company-wide rollout before a single proven result exists.

4. What is a good first AI use case for a mid-size company?

Strong first AI use cases for mid-size B2B companies include invoice classification, customer support ticket triage, meeting summary automation, sales proposal drafting, and internal knowledge search. These share common traits: repetitive, data-driven work with consistent patterns and manageable error stakes.

5. Do you need clean data to start with AI?

Companies do not need perfect data to start with AI. They need data that is good enough for the specific use case chosen: accurate, accessible, and reasonably consistent within that domain. Waiting for perfect data conditions is one of the most persistent reasons companies delay AI adoption unnecessarily.